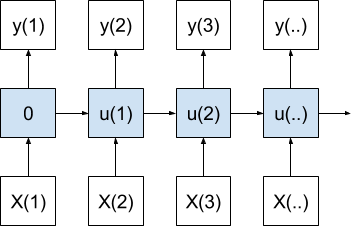

Data Preparation for Variable Length Input Sequences

Last Updated on August 14, 2019

Deep learning libraries assume a vectorized representation of your data. For variable-length sequence prediction problems, this means transforming your data so that every sequence has the same length. This vectorization allows code to perform the matrix operations efficiently in batches for your chosen deep learning algorithm. In this tutorial, you will discover techniques that you can use to prepare your variable-length sequence data for sequence prediction problems […]
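To make the idea of "same length" concrete, here is a minimal sketch in plain Python of padding and truncating sequences to a common length. The helper name `pad_to_length` and its parameters are hypothetical, chosen for illustration only; they are not taken from the tutorial or from any particular library, and deep learning libraries typically ship their own utilities for this.

```python
def pad_to_length(sequences, maxlen=None, value=0, padding="pre", truncating="pre"):
    """Return a list of equal-length lists (hypothetical helper, illustration only).

    padding:    "pre" adds fill values at the start, "post" at the end.
    truncating: "pre" drops items from the start, "post" from the end.
    """
    if maxlen is None:
        # Default to the length of the longest sequence.
        maxlen = max((len(s) for s in sequences), default=0)

    padded = []
    for seq in sequences:
        seq = list(seq)
        if len(seq) > maxlen:
            # Too long: keep either the last or the first maxlen items.
            if truncating == "pre":
                seq = seq[len(seq) - maxlen:]
            else:
                seq = seq[:maxlen]
        # Too short (or exact): build the fill needed to reach maxlen.
        fill = [value] * (maxlen - len(seq))
        padded.append(fill + seq if padding == "pre" else seq + fill)
    return padded


sequences = [[1, 2, 3, 4], [1, 2, 3], [1]]
post_padded = pad_to_length(sequences, padding="post")
pre_truncated = pad_to_length(sequences, maxlen=2)
```

After this step every row has the same length, so the result can be converted into a single rectangular array and processed in batches. Whether to pad or truncate at the start or the end depends on the problem, so both are left as options in this sketch.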
