Machine Translation Weekly 85: The Incredibility of MT Evaluation

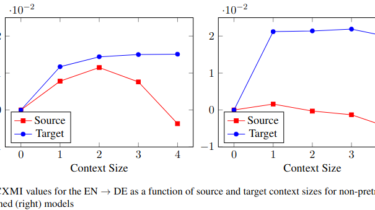

This week, I will comment on a paper that quantifies and exactly measures the dimensions of the elephant in the room of machine translation: the lack of empirical support for claims in research papers on machine translation. The paper, Scientific Credibility of Machine Translation Research: A Meta-Evaluation of 769 Papers, has authors from NICT in Japan and was awarded as an outstanding paper at ACL 2021. The authors manually annotated an […]
