Category: huggingface

Deep Learning over the Internet: Training Language Models Collaboratively

With the additional help of Quentin Lhoest and Sylvain Lesage.

Modern language models often require a significant amount of compute for pretraining, making it impossible to obtain them without access to tens or hundreds of GPUs or […]

Summer At Hugging Face 😎

Summer is now officially over and these last few months have been quite busy at Hugging Face. From new features in the Hub to research and Open Source development, our team has been working hard to empower the community through open and collaborative technology. In this blog post you’ll catch up on everything that happened at Hugging Face in June, July and August!

Showcase Your Projects in Spaces using Gradio

It’s so easy to demonstrate a Machine Learning project thanks to Gradio. In this blog post, we’ll walk you through:

- the recent Gradio integration that helps you demo models from the Hub seamlessly with a few lines of code, leveraging the Inference API;
- how to use Hugging Face Spaces to host demos of your own models.

Hosting your Models and Datasets on Hugging Face Spaces using Streamlit

Streamlit allows you to visualize datasets and build demos of Machine Learning models in a neat way. In this blog post, we will walk you through hosting models and datasets and serving your Streamlit applications in Hugging Face Spaces. Building demos for […]

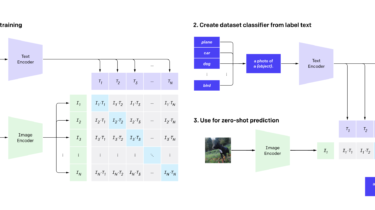

Fine tuning CLIP with Remote Sensing (Satellite) images and captions

In July this year, Hugging Face organized a Flax/JAX Community Week, and invited the community to submit projects to train Hugging Face Transformers models in the areas of Natural Language Processing (NLP) and Computer Vision (CV). Participants used Tensor Processing Units (TPUs) with Flax and JAX. JAX is a linear algebra library (like NumPy) that can do automatic differentiation (Autograd) and compile down to XLA, and Flax is a neural network library and ecosystem for JAX. TPU compute time was […]
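To make the JAX description concrete, here is a minimal sketch (not from the post) of the two features it mentions: `jax.grad` turns a Python function into one that computes its gradient, and `jax.jit` compiles a function through XLA. The linear-regression loss and the toy arrays are illustrative assumptions.

```python
import jax
import jax.numpy as jnp


def loss(w, x, y):
    """Mean squared error of a linear model y ≈ x @ w."""
    pred = x @ w
    return jnp.mean((pred - y) ** 2)


# jax.grad differentiates `loss` with respect to its first argument (w);
# jax.jit traces the resulting function and compiles it with XLA.
grad_loss = jax.jit(jax.grad(loss))

x = jnp.array([[1.0, 2.0], [3.0, 4.0]])
y = jnp.array([1.0, 2.0])
w = jnp.zeros(2)

g = grad_loss(w, x, y)

# Hand-derived gradient for comparison: (2 / n) * x^T (x w - y).
analytic = 2.0 / x.shape[0] * x.T @ (x @ w - y)
```

At `w = 0` the residuals are `[-1, -2]`, so the hand-derived gradient works out to `[-7, -10]`, which is what `grad_loss` returns as well.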
