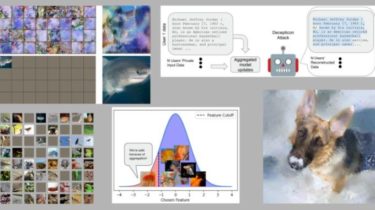

Breaching privacy in federated learning scenarios for vision and text

This PyTorch framework implements a number of gradient inversion attacks that breach privacy in federated learning scenarios,

covering examples with small and large aggregation sizes and examples both vision and text domains.

This includes implementations of recent work such as:

But also a range of implementations of other attacks from optimization attacks (such as “Inverting Gradients” and “See through Gradients”) to recent analytic and recursive attacks. Jupyter notebook examples for these attacks can be found in the examples/ folder.

Overview:

This repository implements two main components. A list of modular attacks under breaching.attacks and a list of relevant use cases (including server threat model, user setup, model architecture and dataset) under breaching.cases. All attacks and scenarios are highly modular and can be customized