A system for training neural networks to be provably robust and for proving that they are robust

DiffAI v3

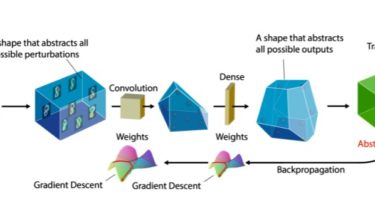

DiffAI is a system for training neural networks to be provably robust and for proving that they are robust. The system was developed for the 2018 ICML paper and the 2019 arXiv paper.

Background

By now, it is well known that otherwise working networks can be tricked by clever attacks. For example, Goodfellow et al. demonstrated a network with high classification accuracy that classified one image of a panda correctly and a seemingly identical attack picture incorrectly. Many defenses against this type of attack have been produced, but very few produce networks for which it is feasible to prove that a prediction is safe.

Abstract Interpretation is a technique for verifying properties of programs by soundly overapproximating their behavior. When applied to neural networks, an infinite set