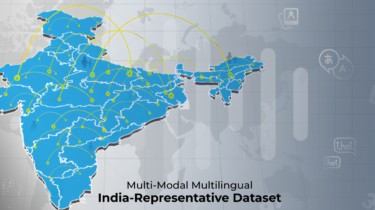

Build awesome datasets for video generation

(This post was authored by hlky and Sayak.) Tooling for image generation datasets is well established: img2dataset is a fundamental tool for large-scale dataset preparation, complemented by various community guides, scripts, and UIs that cover smaller-scale initiatives. Our ambition is to make tooling for video generation datasets equally established, by creating open video
