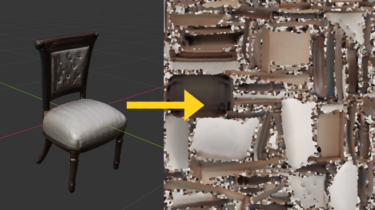

Scaling robotics datasets with video encoding

Over the past few years, text- and image-based models have improved dramatically, primarily through scaling up model and dataset sizes. While the internet provides an extensive corpus of text and images for LLMs and image generation models, robotics lacks a comparably vast, diverse, and high-quality data source, as well as efficient data formats. Despite efforts like Open X, we are still far from the scale and diversity available to Large Language Models. Additionally, we lack the necessary […]
