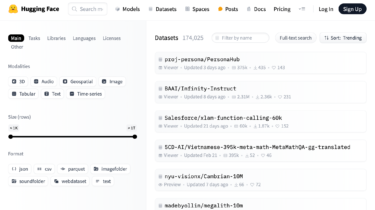

Announcing New Dataset Search Features

The AI and ML community has shared more than 180,000 public datasets on the Hugging Face Dataset Hub. Researchers and engineers use these datasets for various tasks, from training LLMs to chat with users to evaluating automatic speech recognition or computer vision systems. Dataset discoverability and visualization are key challenges in letting AI builders find, explore, and transform datasets to fit their use cases. At Hugging Face, we are building the Dataset Hub as the place for the community […]

Banque des Territoires (CDC Group) x Polyconseil x Hugging Face: Enhancing a Major French Environmental Program with a Sovereign Data Solution

Case Study in English – Banque des Territoires (CDC Group) x Polyconseil x Hugging Face: Enhancing a Major French Environmental Program with a Sovereign Data Solution

Case Study in French – Banque des Territoires (Groupe CDC) x Polyconseil x Hugging Face : améliorer un programme environnemental français majeur grâce à une solution data souveraine

Executive summary

The collaboration initiated last January between Banque des Territoires (part of the Caisse des Dépôts et Consignations group), Polyconseil, and Hugging Face

Google Cloud TPUs made available to Hugging Face users

We’re excited to share some great news! AI builders are now able to accelerate their applications with Google Cloud TPUs on Hugging Face Inference Endpoints and Spaces! For those who might not be familiar, TPUs are custom-made AI hardware designed by Google. They are known for their ability to scale cost-effectively and deliver impressive performance across various AI workloads. This hardware has played a crucial role in some of Google’s latest innovations, including the development of the Gemma 2 open […]

Preference Optimization for Vision Language Models with TRL

Training models to understand and predict human preferences can be incredibly complex. Traditional methods, like supervised fine-tuning, often require assigning specific labels to data, which is not cost-efficient, especially for nuanced tasks. Preference optimization is an alternative approach that can simplify this process and yield more accurate results. By focusing on comparing and ranking candidate answers rather than assigning fixed labels, preference optimization allows models to capture the subtleties of human judgment more effectively. Preference optimization is widely used for […]

Experimenting with Automatic PII Detection on the Hub using Presidio

At Hugging Face, we’ve noticed a concerning trend in machine learning (ML) datasets hosted on our Hub: undocumented private information about individuals. This poses some unique challenges for ML practitioners. In this blog post, we’ll explore different types of datasets containing a type of private information known as Personally Identifying Information (PII), the issues they present, and a new feature we’re experimenting with on the Dataset Hub to help address these challenges. Types of Datasets with PII We

Announcing New Hugging Face and KerasHub integration

The Hugging Face Hub is a vast repository, currently hosting 750K+ public models, offering a diverse range of pre-trained models for various machine learning frameworks. Among these, 346,268 (as of the time of writing) models are built using the popular Transformers library. The KerasHub library recently added an integration with the Hub compatible with a

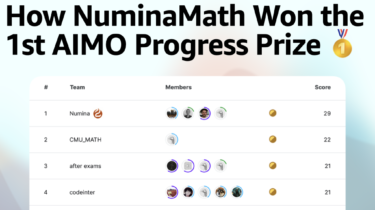

How NuminaMath Won the 1st AIMO Progress Prize

This year, Numina and Hugging Face collaborated to compete in the 1st Progress Prize of the AI Math Olympiad (AIMO). This competition involved fine-tuning open LLMs to solve difficult math problems that high school students use to train for the International Math Olympiad. We’re excited to share that our model — NuminaMath 7B TIR — was the winner and managed to solve 29 out of 50 problems on the private test set 🥳! In this blog post, we introduce the […]

How we leveraged distilabel to create an Argilla 2.0 Chatbot

Discover how to build a Chatbot for a tool of your choice (Argilla 2.0 in this case) that can understand technical documentation and chat with users about it. In this article, we’ll show you how to leverage distilabel and fine-tune a domain-specific embedding model to create a conversational model that’s both accurate and engaging. This article outlines the process of creating a Chatbot for Argilla 2.0. We will: create a synthetic dataset from the technical documentation to fine-tune a domain-specific […]

SmolLM – blazingly fast and remarkably powerful

This blog post introduces SmolLM, a family of state-of-the-art small models with 135M, 360M, and 1.7B parameters, trained on a new high-quality dataset. It covers data curation, model evaluation, and usage. Introduction There is increasing interest in small language models that can operate on local devices. This trend involves techniques such as distillation or quantization to compress large models, as well as training small models from scratch on large datasets. These approaches enable novel applications while dramatically
