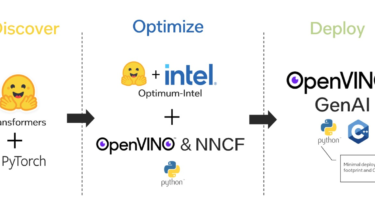

Optimize and deploy models with Optimum-Intel and OpenVINO GenAI

Deploying Transformers models at the edge or on client devices requires careful attention to performance and compatibility. Python, though powerful, is not always ideal for such deployments, especially in environments dominated by C++. This post walks you through optimizing and deploying Hugging Face Transformers models with Optimum-Intel and OpenVINO™ GenAI, so that inference runs efficiently with minimal dependencies.

Table of Contents

- Why Use OpenVINO™ for Edge Deployment

- Step 1: Setting Up the Environment

- Step 2: Exporting Models to OpenVINO IR