Llama 2 on Amazon SageMaker: A Benchmark

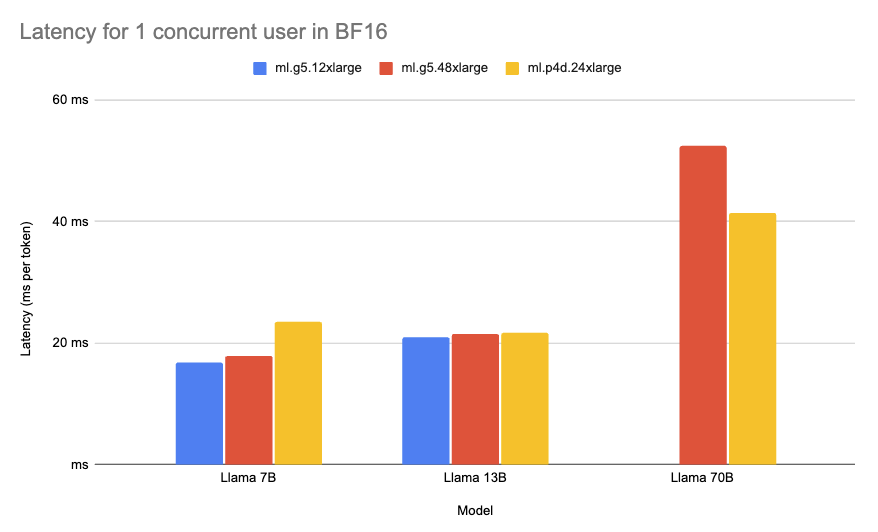

Deploying large language models (LLMs) and other generative AI models can be challenging due to their computational requirements and latency needs. To provide useful recommendations to companies looking to deploy Llama 2 on Amazon SageMaker with the Hugging Face LLM Inference Container, we created a comprehensive benchmark analyzing over 60 different deployment configurations for Llama