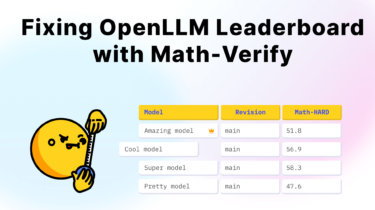

Fixing Open LLM Leaderboard with Math-Verify

Three weeks ago, we showed how hard it is to correctly evaluate LLM performance on math problems and introduced Math-Verify, a better solution for validating models on math (read more in the announcement)!

Today, we’re thrilled to share that we’ve used Math-Verify to thoroughly re-evaluate all 3,751 models ever submitted to the Open LLM Leaderboard, for even fairer and more robust model comparisons!

Why math evaluation on the Open LLM Leaderboard was broken

The