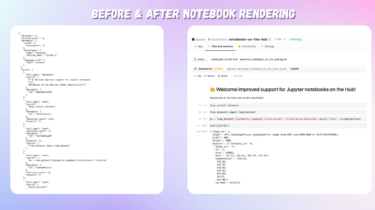

Jupyter X Hugging Face

We’re excited to announce improved support for Jupyter notebooks hosted on the Hugging Face Hub! From serving as an essential learning resource to being a key tool for model development, Jupyter notebooks have become central to many areas of machine learning. Notebooks’ interactive and visual nature lets you get feedback quickly as you develop models, datasets, and demos. For many people, their first exposure to training machine learning models is via a Jupyter notebook, and many practitioners use […]
