Training mRNA Language Models Across 25 Species for $165

By OpenMed, Open-Source Agentic AI for Healthcare & Life Sciences

TL;DR: We built an end-to-end protein AI pipeline covering structure prediction, sequence design, and codon optimization. After comparing multiple transformer architectures for codon-level language modeling, CodonRoBERTa-large-v2 emerged as the clear winner with a perplexity of 4.10 and a Spearman CAI correlation of 0.40, significantly outperforming ModernBERT. We then scaled to 25 species, trained 4 production models in 55 GPU-hours, and built a species-conditioned system that, to our knowledge, no other open-source project offers. Complete results, architectural decisions, and runnable code are below.

Contents

- What We Built

- The Architecture Exploration

- The Pipeline

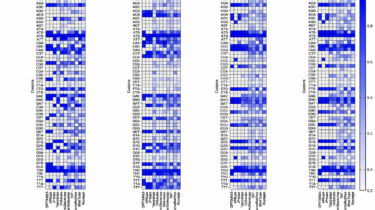

- Scaling to Multi-Species

- The End-to-End Workflow

- Where This Stands and What’s Next

- References

Imagine going from a therapeutic protein concept to a synthesis-ready, codon-optimized DNA sequence in an afternoon. That is the pipeline OpenMed set out to build, and this post documents the process from start to finish.
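For readers unfamiliar with the two metrics quoted in the TL;DR, here is a minimal sketch of how perplexity, the Codon Adaptation Index (CAI), and a Spearman rank correlation are conventionally defined. It is an illustration under stated assumptions, not the evaluation code used for the reported numbers: the function names are hypothetical, the weight table is a toy with made-up values, and the exact protocol behind the 0.40 correlation (which model score is ranked against CAI) is assumed rather than taken from the post.

```python
import math

def perplexity(token_nlls):
    """Perplexity = exp(mean negative log-likelihood in nats) over tokens."""
    return math.exp(sum(token_nlls) / len(token_nlls))

# Toy relative-adaptiveness weights: each codon's usage frequency divided by
# the frequency of the most-used synonymous codon. Values are made up for
# illustration and are NOT real codon usage data.
TOY_WEIGHTS = {"GCC": 1.0, "GCT": 0.5, "AAG": 1.0, "AAA": 0.25}

def cai(codons, weights):
    """CAI = geometric mean of the per-codon relative-adaptiveness weights."""
    logs = [math.log(weights[c]) for c in codons]
    return math.exp(sum(logs) / len(logs))

def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation = Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)

if __name__ == "__main__":
    # A model that is uniformly unsure among 4 codons has perplexity 4.
    print(perplexity([math.log(4)] * 3))
    print(cai(["GCT", "AAA"], TOY_WEIGHTS))
    print(spearman([0.1, 0.4, 0.9], [0.2, 0.5, 0.7]))
```

Read this way, a perplexity of 4.10 means the model is, on average, about as uncertain as a uniform choice among roughly four codons at each position, out of 64 possible codons.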

In