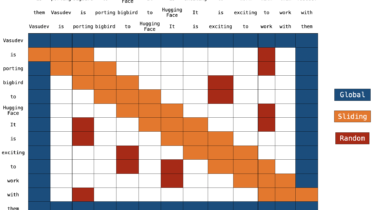

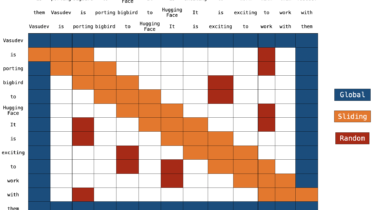

Understanding BigBird’s Block Sparse Attention

Transformer-based models have been shown to be very useful for many NLP tasks. However, a major limitation of transformer-based models is their time and memory complexity (where

Deep Learning, NLP, NMT, AI, ML
